Prompt Injection and Malware Pattern Detection

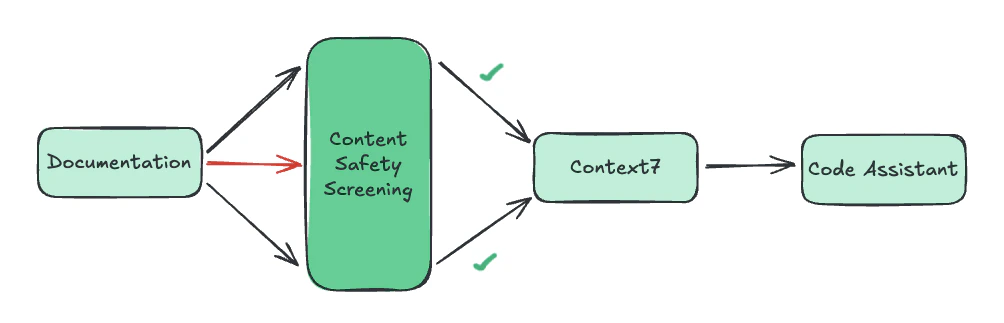

Context7 indexes documentation from public and private sources. To prevent malicious content from reaching AI assistants, Context7 employs a layered malicious content detection system.

- Robust Detection — Content is analyzed using a classifier tailored for Context7 to identify prompt injection attempts and malware-related patterns

- Targeted Validation — Suspicious content is subjected to additional checks

- Skills.md Handling — A separate but related detection pipeline is used for Skills files, adapted to their distinct structure and intent

- Continuous Monitoring — Flagged content is tracked and reviewed on an ongoing basis

- Regular Updates — Detection logic is updated to address evolving attack methods and new injection techniques